Run what looks like the same request through a model twice and you might blame randomness for the difference. That instinct is often wrong. When we tested the same subject, a ceramic bowl of fruit, across two runs in Nano Banana AI, the results diverged sharply. The first run used the flat prompt “a ceramic bowl with fruit” and produced a flat, washed-out composition with indistinct apples. The second used a restructured prompt, “a ceramic bowl with glazed oranges, one lemon, dim light from left,” and returned a scene with recognizable texture and directional shadowing.

The model hadn’t changed between runs. The prompt had.

After a series of controlled tests using Banana AI’s image generation pipeline, three variables emerged as the primary levers that actually shift output quality: how you structure the prompt, what source asset you feed in, and how you choose to iterate. Each one operates independently, and neglecting any of them degrades results regardless of which model tier you’re running.

Why Two Identical Prompts Can Look Completely Different

The common assumption is that variance comes from model randomness. In practice, the bigger source of variance is prompt formulation. A “flat” prompt (three nouns and an adjective) gives the model more degrees of freedom than most operators realize. The model has to infer scene logic, lighting, depth, and composition from minimal constraints. The generation process then fills those gaps with whatever patterns the model learned most strongly from its training data.

A “structured” prompt supplies constraints that reduce the number of plausible interpretations. By specifying subject position, lighting direction, and a composition cue, you shrink the solution space. The output becomes more predictable not because the model behaves differently, but because you’ve removed ambiguity from the instruction.

This is the foundation of the three-lever framework: prompt structure, source asset selection, and iteration pattern. Each lever controls a different part of the generation process, and each can be tested in isolation.

Lever 1: Prompt Structure – Syntax Over Semantics

In our tests, Banana AI appeared to weight early tokens more heavily, a behavior commonly attributed to text-conditioned diffusion models. Words placed at the beginning of the prompt anchored the scene. Modifiers that appeared later influenced detail, but only within the bounds set by the first few tokens.

We ran a side-by-side test using Nano Banana AI to compare two prompt formats. The first was a flat list: “woman, red dress, city street, night.” The second used a structured template: “young woman in red dress [subject], standing under a streetlamp on a wet pavement [environment], shallow depth of field, warm window light in background [composition].” The structured prompt produced consistent framing across five generations. The flat prompt drifted: some outputs showed a full-body shot, others a close-up, and one omitted the woman entirely in favor of a generic street scene.

The recommendation that emerged from these tests is a repeatable syntax: [Subject] + [Key attribute] + [Lighting/Environment] + [Composition cue], with an optional style tag appended last. In our tests this ordering reduced subject drift without requiring longer prompts, which is consistent with early tokens anchoring the scene.
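The syntax above can be sketched as a small prompt builder. This is a minimal illustration in Python, not part of any Banana AI tooling: the `PromptSpec` class, its field names, and `build_prompt` are hypothetical names introduced here, and the choice to join subject and attribute with a space is an assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PromptSpec:
    """Hypothetical container for the four slots plus an optional style tag."""
    subject: str
    key_attribute: str
    lighting_environment: str
    composition_cue: str
    style_tag: Optional[str] = None  # optional, always appended last


def build_prompt(spec: PromptSpec) -> str:
    """Assemble the slots in the fixed order: subject first, style last."""
    parts = [
        f"{spec.subject} {spec.key_attribute}",  # subject and attribute lead
        spec.lighting_environment,
        spec.composition_cue,
    ]
    if spec.style_tag:
        parts.append(spec.style_tag)
    # Drop empty slots so a missing field does not leave a stray comma.
    cleaned = [p.strip() for p in parts if p and p.strip()]
    return ", ".join(cleaned)


spec = PromptSpec(
    subject="young woman",
    key_attribute="in red dress",
    lighting_environment="standing under a streetlamp on a wet pavement",
    composition_cue="shallow depth of field, warm window light in background",
)
print(build_prompt(spec))
```

Filled in this way, the builder reproduces the structured test prompt from the side-by-side comparison, minus the bracketed labels. Keeping the slots as named fields also makes it easy to change one slot at a time between batches.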

One limitation worth noting: this syntax works reliably for single-subject scenes. For multi-subject or complex narratives, the pattern may need adjustment, and we did not test those scenarios at depth.

Lever 2: Source Asset Selection – What You Start With Dictates What You End With

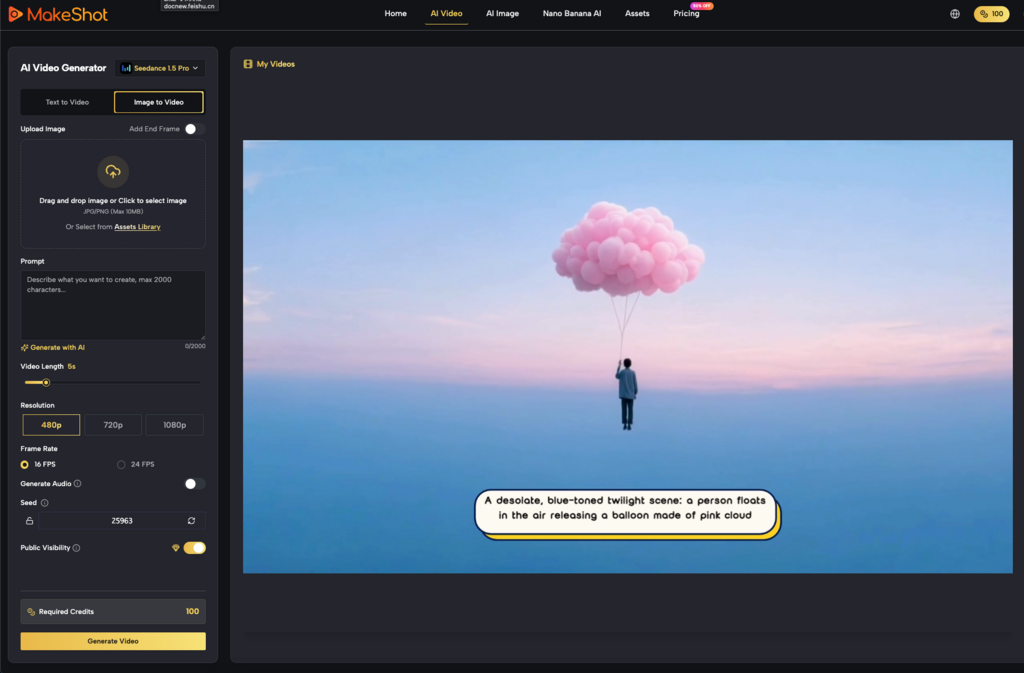

When using image-to-image workflows in Nano Banana AI, the source image is not a suggestion — it is a structural constraint. The model interprets the source as both composition reference and color base. No amount of prompt refinement can fully override a poor source.

We tested this by feeding two different source images into the same target prompt. The first source was an overexposed product photo with clipped highlights and compressed shadows. The second was a studio shot with even exposure, visible midtones, and no blown-out areas. The target prompt was identical in both runs: “ceramic vase on a wooden table, soft north light, muted greens and grays.”

The overexposed source produced outputs with washed-out highlights that no amount of prompt darkening could recover. The well-lit source preserved texture in the vase’s glaze and maintained the intended color palette. The model treated the source’s contrast range as a default it could not fully override.
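A source with clipped highlights can be flagged before it reaches the model. The sketch below is one possible check, written in plain Python over a list of 0-255 luminance values; the function name and the thresholds (5 and 250) are assumptions chosen for illustration, not values taken from our tests.

```python
def clipping_report(luma, low=5, high=250):
    """Return the fraction of pixels at or beyond each end of the range.

    luma: iterable of luminance values in 0..255.
    low/high: assumed cut-offs for "clipped" shadows and highlights.
    """
    values = list(luma)
    if not values:
        raise ValueError("no pixel values supplied")
    total = len(values)
    shadows = sum(1 for v in values if v <= low) / total
    highlights = sum(1 for v in values if v >= high) / total
    return {"clipped_shadows": shadows, "clipped_highlights": highlights}


# Tiny synthetic example: one black pixel, one midtone, two blown highlights.
report = clipping_report([0, 128, 255, 255])
print(report)
```

What counts as too much clipping depends on the subject, so any rejection threshold applied to this report would need to be tuned against real source images.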

For operations leads building repeatable pipelines, the practical takeaway is to pre-process source assets before feeding them into the model. Cropping to 1:1, boosting midtones to preserve texture, and removing text overlays or watermarks all reduce the number of artifacts the model has to “fix” during generation. These steps are cheap, but they have an outsized effect on usable yield.
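Two of these steps reduce to simple arithmetic. The sketch below computes a centered 1:1 crop box and applies a gamma curve as one way of lifting midtones. Both function names are hypothetical, and the gamma curve (with an assumed default of 1.2) is a stand-in technique, not necessarily the adjustment used in our tests.

```python
def center_square_box(width, height):
    """Return (left, top, right, bottom) for a centered 1:1 crop."""
    side = min(width, height)
    left = (width - side) // 2
    top = (height - side) // 2
    return (left, top, left + side, top + side)


def lift_midtones(value, gamma=1.2):
    """Apply a gamma curve to one 0..255 channel value.

    gamma > 1 brightens midtones while leaving 0 and 255 unchanged.
    """
    if not 0 <= value <= 255:
        raise ValueError("value must be in 0..255")
    return round(255 * (value / 255) ** (1 / gamma))


print(center_square_box(1200, 800))
print([lift_midtones(v) for v in (0, 64, 128, 192, 255)])
```

The box tuple uses the (left, upper, right, lower) ordering that Pillow's `Image.crop` accepts, so it can be passed to an imaging library directly if one is available.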

Lever 3: Iteration Pattern – Single Shot vs. Progressive Refinement

There are two common approaches to iterating with AI image generation. The first is to run a single prompt multiple times and pick the best result. The second is to generate an initial batch, select a candidate, and then refine the prompt around that candidate across successive rounds.

We tested both methods using the same subject and a fixed budget of ten generations per method. The single-shot approach (ten generations from one prompt) yielded roughly two outputs that matched the brief. The progressive refinement approach (three cycles of generation with one variable changed each cycle: first lighting, then composition, then style) returned approximately six usable assets from the same total generation budget.

The difference is not a matter of luck. Progressive refinement lets the operator diagnose which variable is causing failure. A preliminary batch that consistently misses lighting tells you to adjust the lighting token, not the subject. The single-shot method isolates no variable, so all failures look like model randomness.
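The diagnosis step can be sketched as a tally. Assuming a reviewer marks each output with the variables it missed, the most frequent miss is the one to adjust in the next cycle. The function name, the review format, and the tie-breaking rule (following the lighting, composition, style order) are all assumptions made for this illustration.

```python
from collections import Counter

# Order in which variables were refined in the test described above.
REFINEMENT_ORDER = ["lighting", "composition", "style"]


def next_variable_to_adjust(batch_reviews):
    """Pick the variable that failed most often in a reviewed batch.

    batch_reviews: list of sets, one per output, naming the variables
    that output missed. An empty set means the output was usable.
    Returns None when no output failed on anything.
    """
    counts = Counter(var for failed in batch_reviews for var in failed)
    if not counts:
        return None

    def rank(var):
        # Most failures first; ties fall back to the refinement order.
        if var in REFINEMENT_ORDER:
            order = REFINEMENT_ORDER.index(var)
        else:
            order = len(REFINEMENT_ORDER)
        return (-counts[var], order)

    return min(counts, key=rank)


reviews = [{"lighting"}, {"lighting", "composition"}, set(), {"lighting"}]
print(next_variable_to_adjust(reviews))
```

Changing only the returned variable in the next cycle keeps the comparison clean: if the failure rate drops, the change can be attributed to that one adjustment.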

This pattern depends heavily on the task. For tight briefs with specific brand guidelines, progressive refinement wins. For brainstorming or concept exploration, single-shot batching is faster and covers more visual territory. Neither method is superior across all use cases, and the appropriate choice depends on whether the goal is consistency or discovery.

What We Cannot Conclude About Banana AI on This Evidence

These tests were conducted using Nano Banana AI on a single hardware configuration. Results may differ when using the full Banana AI tier or across different credit and resolution settings. The model architecture and sampling steps may vary between tiers, and we cannot assume these findings generalize without replication.

Output quality is also inherently subjective. An image considered “usable” for a social media post may fail for a hero banner or print application. Our tests leaned toward production-grade criteria — good composition, consistent lighting, subject integrity — but those criteria do not cover all workflows.

Finally, the iteration pattern comparison did not control for prompt length or seed randomization across cycles. Repeating this test across more seeds would improve statistical confidence, but for the purposes of this article, the directional pattern was strong enough to be actionable.
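To show how much uncertainty ten generations per method leave, here is a sketch of a Wilson score interval for a success rate. It treats the approximate counts above (two and six usable outputs out of ten) as exact, which is an assumption. The function name is hypothetical, and the 95% level (z = 1.96) is a conventional choice rather than something the tests specified.

```python
import math


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a binomial proportion (default ~95%)."""
    if trials <= 0 or not 0 <= successes <= trials:
        raise ValueError("need 0 <= successes <= trials and trials > 0")
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(
        p * (1 - p) / trials + z * z / (4 * trials * trials)
    ) / denom
    return (center - half, center + half)


single_shot = wilson_interval(2, 10)   # assumed exact count of usable outputs
progressive = wilson_interval(6, 10)   # assumed exact count of usable outputs
print(single_shot, progressive)
```

At this sample size the two intervals overlap, which is why more seeds and more runs would be needed before treating the gap as more than a directional pattern.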

The point of isolating these three levers is not to claim universal rules. It is to give operators controllable inputs in a system that often feels like a black box. Format the prompt deliberately, curate the source asset, and choose the iteration pattern that fits the task. Those three choices will determine the output far more than any model version number will.